YOLOv5 PTQ and QAT Quantization Source Code

Resource description:

English | [简体中文](.github/README_cn.md)

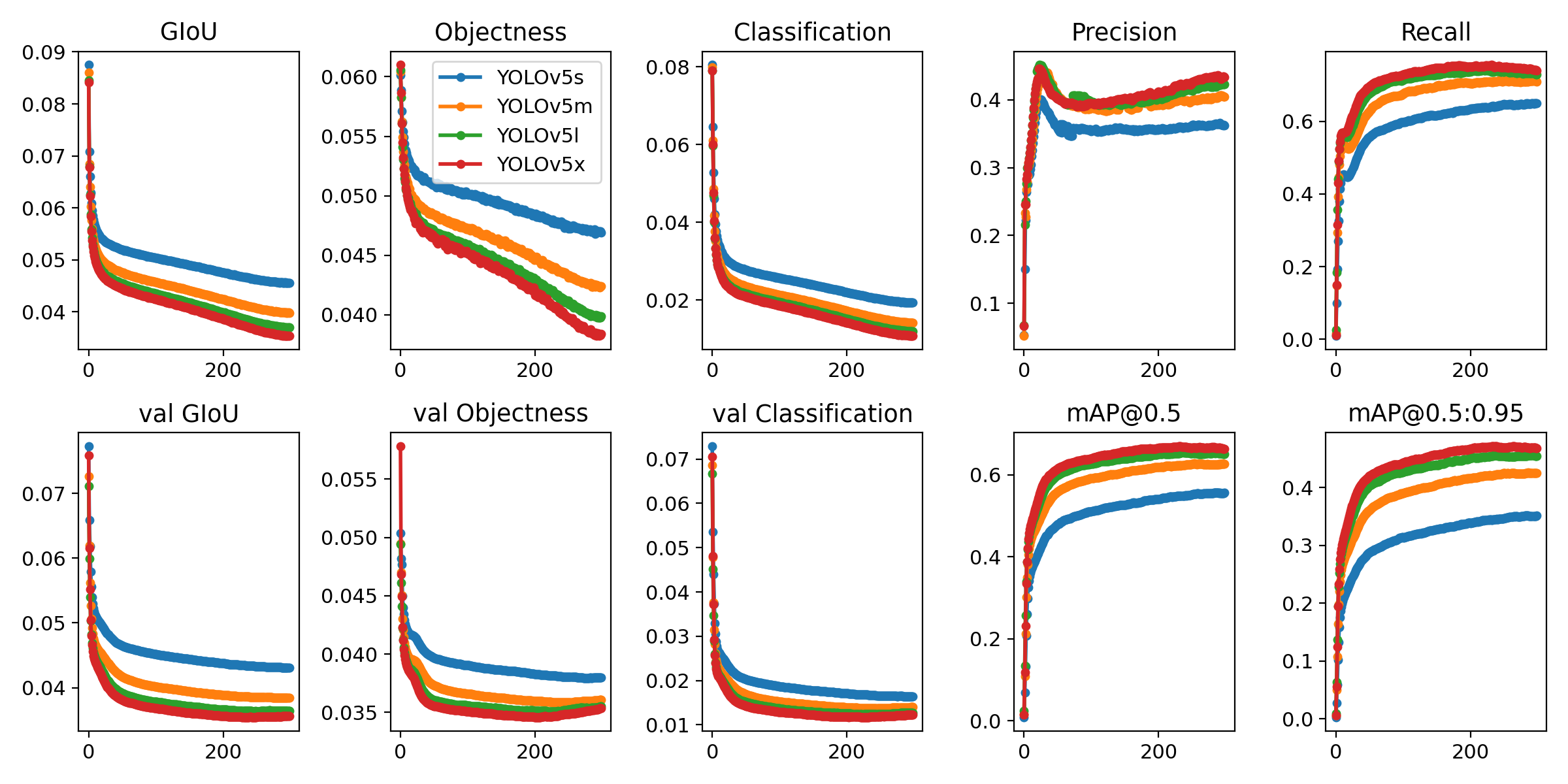

YOLOv5 🚀 is a family of object detection architectures and models pretrained on the COCO dataset, and represents Ultralytics open-source research into future vision AI methods, incorporating lessons learned and best practices evolved over thousands of hours of research and development.

## Documentation

See the [YOLOv5 Docs](https://docs.ultralytics.com) for full documentation on training, testing and deployment.

## Quick Start Examples

### Install

Clone repo and install [requirements.txt](https://github.com/ultralytics/yolov5/blob/master/requirements.txt) in a [**Python>=3.7.0**](https://www.python.org/) environment, including [**PyTorch>=1.7**](https://pytorch.org/get-started/locally/).

```bash
git clone https://github.com/ultralytics/yolov5  # clone
cd yolov5
pip install -r requirements.txt  # install
```

### Inference

YOLOv5 [PyTorch Hub](https://github.com/ultralytics/yolov5/issues/36) inference. [Models](https://github.com/ultralytics/yolov5/tree/master/models) download automatically from the latest YOLOv5 [release](https://github.com/ultralytics/yolov5/releases).

```python
import torch

# Model
model = torch.hub.load('ultralytics/yolov5', 'yolov5s')  # or yolov5n - yolov5x6, custom

# Images
img = 'https://ultralytics.com/images/zidane.jpg'  # or file, Path, PIL, OpenCV, numpy, list

# Inference
results = model(img)

# Results
results.print()  # or .show(), .save(), .crop(), .pandas(), etc.
```

### Inference with detect.py

`detect.py` runs inference on a variety of sources, downloading [models](https://github.com/ultralytics/yolov5/tree/master/models) automatically from the latest YOLOv5 [release](https://github.com/ultralytics/yolov5/releases) and saving results to `runs/detect`.

```bash
python detect.py --source 0  # webcam
python detect.py --source img.jpg  # image
python detect.py --source vid.mp4  # video
python detect.py --source path/  # directory
python detect.py --source 'path/*.jpg'  # glob
python detect.py --source 'https://youtu.be/Zgi9g1ksQHc'  # YouTube
python detect.py --source 'rtsp://example.com/media.mp4'  # RTSP, RTMP, HTTP stream
```

### Training

The commands below reproduce YOLOv5 [COCO](https://github.com/ultralytics/yolov5/blob/master/data/scripts/get_coco.sh) results. [Models](https://github.com/ultralytics/yolov5/tree/master/models) and [datasets](https://github.com/ultralytics/yolov5/tree/master/data) download automatically from the latest YOLOv5 [release](https://github.com/ultralytics/yolov5/releases). Training times for YOLOv5n/s/m/l/x are 1/2/4/6/8 days on a V100 GPU ([Multi-GPU](https://github.com/ultralytics/yolov5/issues/475) times faster). Use the largest `--batch-size` possible, or pass `--batch-size -1` for YOLOv5 [AutoBatch](https://github.com/ultralytics/yolov5/pull/5092). Batch sizes shown for V100-16GB.

```bash
python train.py --data coco.yaml --cfg yolov5n.yaml --weights '' --batch-size 128
python train.py --data coco.yaml --cfg yolov5s.yaml --weights '' --batch-size 64
python train.py --data coco.yaml --cfg yolov5m.yaml --weights '' --batch-size 40
python train.py --data coco.yaml --cfg yolov5l.yaml --weights '' --batch-size 24
python train.py --data coco.yaml --cfg yolov5x.yaml --weights '' --batch-size 16
```
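The model and batch-size pairings in the training command can also be assembled programmatically, which may be convenient when scripting several runs. The following is a minimal sketch, not part of the repository: the names `V100_16GB_BATCH_SIZES` and `train_command` are hypothetical helpers introduced here for illustration, and the batch sizes are simply the V100-16GB values quoted above.

```python
import shlex

# Hypothetical lookup table: batch sizes quoted above for a V100-16GB GPU.
V100_16GB_BATCH_SIZES = {
    "yolov5n": 128,
    "yolov5s": 64,
    "yolov5m": 40,
    "yolov5l": 24,
    "yolov5x": 16,
}


def train_command(model, autobatch=False):
    """Build the argv list for training `model` from scratch on COCO.

    With autobatch=True the batch size is set to -1, which the text above
    describes as the way to request YOLOv5 AutoBatch.
    """
    if model not in V100_16GB_BATCH_SIZES:
        raise ValueError(f"unknown model: {model!r}")
    batch_size = -1 if autobatch else V100_16GB_BATCH_SIZES[model]
    return [
        "python", "train.py",
        "--data", "coco.yaml",
        "--cfg", f"{model}.yaml",
        "--weights", "",  # empty string: no pretrained weights
        "--batch-size", str(batch_size),
    ]


if __name__ == "__main__":
    # Print one shell-quoted command line per model.
    for name in V100_16GB_BATCH_SIZES:
        print(" ".join(shlex.quote(arg) for arg in train_command(name)))
```

Returning an argument list rather than a single string means the result can be handed to `subprocess.run(cmd, check=True)` from the repository root without extra shell quoting.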

### Tutorials

- [Train Custom Data](https://github.com/ultralytics/yolov5/wiki/Train-Custom-Data) 🚀 RECOMMENDED
- [Tips for Best Training Results](https://github.com/ultralytics/yolov5/wiki/Tips-for-Best-Training-Results) ☘️ RECOMMENDED
- [Multi-GPU Training](https://github.com/ultralytics/yolov5/issues/475)
- [PyTorch Hub](https://github.com/ultralytics/yolov5/issues/36) 🌟 NEW
- [TFLite, ONNX, CoreML, TensorRT Export](https://github.com/ultralytics/yolov5/issues/251) 🚀
- [Test-Time Augmentation (TTA)](https://github.com/ultralytics/yolov5/issues/303)
- [Model Ensembling](https://github.com/ultralytics/yolov5/issues/318)
- [Model Pruning/Sparsity](https://github.com/ultralytics/yolov5/issues/304)
- [Hyperpar

Resource file list:

yolov5_quant/

yolov5_quant/.dockerignore 3.61KB

yolov5_quant/.gitattributes 75B

yolov5_quant/.github/

yolov5_quant/.github/CODE_OF_CONDUCT.md 5.11KB

yolov5_quant/.github/ISSUE_TEMPLATE/

yolov5_quant/.github/ISSUE_TEMPLATE/bug-report.yml 2.87KB

yolov5_quant/.github/ISSUE_TEMPLATE/config.yml 280B

yolov5_quant/.github/ISSUE_TEMPLATE/feature-request.yml 1.76KB

yolov5_quant/.github/ISSUE_TEMPLATE/question.yml 1.12KB

yolov5_quant/.github/PULL_REQUEST_TEMPLATE.md 693B

yolov5_quant/.github/README_cn.md 28.19KB

yolov5_quant/.github/SECURITY.md 359B

yolov5_quant/.github/dependabot.yml 441B

yolov5_quant/.github/workflows/

yolov5_quant/.github/workflows/ci-testing.yml 5.48KB

yolov5_quant/.github/workflows/codeql-analysis.yml 2KB

yolov5_quant/.github/workflows/docker.yml 1.44KB

yolov5_quant/.github/workflows/greetings.yml 5.02KB

yolov5_quant/.github/workflows/rebase.yml 639B

yolov5_quant/.github/workflows/stale.yml 1.97KB

yolov5_quant/.gitignore 3.89KB

yolov5_quant/.idea/

yolov5_quant/.idea/.gitignore 50B

yolov5_quant/.idea/inspectionProfiles/

yolov5_quant/.idea/inspectionProfiles/Project_Default.xml 2.92KB

yolov5_quant/.idea/inspectionProfiles/profiles_settings.xml 174B

yolov5_quant/.idea/misc.xml 199B

yolov5_quant/.idea/modules.xml 279B

yolov5_quant/.idea/workspace.xml 6.26KB

yolov5_quant/.idea/yolov5-6.2.iml 566B

yolov5_quant/.pre-commit-config.yaml 1.52KB

yolov5_quant/CONTRIBUTING.md 4.85KB

yolov5_quant/LICENSE 34.3KB

yolov5_quant/PTQ量化代码阅读/

yolov5_quant/PTQ量化代码阅读/calibrate_model.png 321.42KB

yolov5_quant/PTQ量化代码阅读/collect_stats.png 296.47KB

yolov5_quant/PTQ量化代码阅读/compute_amax.png 151.97KB

yolov5_quant/PTQ量化代码阅读/evaluate_accuracy.png 213.93KB

yolov5_quant/PTQ量化代码阅读/prepare_model.png 304.8KB

yolov5_quant/PTQ量化代码阅读/sensitive_analysis.png 370.49KB

yolov5_quant/PTQ量化调试截图/

yolov5_quant/PTQ量化调试截图/Snipaste_2024-01-17_10-28-06.png 105.08KB

yolov5_quant/PTQ量化调试截图/Snipaste_2024-01-17_10-28-54.png 37.41KB

yolov5_quant/PTQ量化调试截图/Snipaste_2024-01-17_10-31-14.png 147.63KB

yolov5_quant/PTQ量化调试截图/Snipaste_2024-01-17_10-33-13.png 51.24KB

yolov5_quant/PTQ量化调试截图/Snipaste_2024-01-17_10-33-55.png 83.76KB

yolov5_quant/PTQ量化调试截图/Snipaste_2024-01-17_10-34-55.png 106.71KB

yolov5_quant/PTQ量化调试截图/Snipaste_2024-01-17_10-36-12.png 61.97KB

yolov5_quant/PTQ量化调试截图/Snipaste_2024-01-17_10-36-59.png 97.65KB

yolov5_quant/PTQ量化调试截图/Snipaste_2024-01-17_10-38-27.png 75.21KB

yolov5_quant/PTQ量化调试截图/Snipaste_2024-01-17_10-38-54.png 59.91KB

yolov5_quant/PTQ量化调试截图/Snipaste_2024-01-17_10-43-30.png 44.46KB

yolov5_quant/PTQ量化调试截图/Snipaste_2024-01-17_10-49-05.png 119.97KB

yolov5_quant/PTQ量化调试截图/Snipaste_2024-01-17_10-50-28.png 121.31KB

yolov5_quant/PTQ量化调试截图/Snipaste_2024-01-17_12-18-27.png 107.67KB

yolov5_quant/PTQ量化调试截图/Snipaste_2024-01-17_12-31-06.png 128.4KB

yolov5_quant/PTQ量化调试截图/_DEFAULT_QUANT_MAP.png 116.77KB

yolov5_quant/PTQ量化调试截图/检测头.png 160.19KB

yolov5_quant/QAT量化代码阅读/

yolov5_quant/QAT量化代码阅读/select_layers_for_finetuning.png 324.54KB

yolov5_quant/QAT量化代码阅读/train.png 370.01KB

yolov5_quant/QAT量化调试截图/

yolov5_quant/QAT量化调试截图/Snipaste_2024-01-21_15-14-58.png 77.03KB

yolov5_quant/QAT量化调试截图/Snipaste_2024-01-21_15-21-55.png 92.31KB

yolov5_quant/QAT量化调试截图/Snipaste_2024-01-21_16-57-17.png 121.57KB

yolov5_quant/QAT量化调试截图/keep_idx.png 191KB

yolov5_quant/QAT量化调试截图/train_layers.png 95.71KB

yolov5_quant/README.md 28.92KB

yolov5_quant/__pycache__/

yolov5_quant/__pycache__/py_quant_utils.cpython-38.pyc 4.71KB

yolov5_quant/__pycache__/quant_flow_ptq_int8.cpython-38.pyc 8.92KB

yolov5_quant/__pycache__/val.cpython-38.pyc 13.43KB

yolov5_quant/classify/

yolov5_quant/classify/predict.py 3.94KB

yolov5_quant/classify/train.py 15.4KB

yolov5_quant/classify/val.py 6.78KB

yolov5_quant/coco128/

yolov5_quant/coco128/images/

yolov5_quant/coco128/images/train2017/

yolov5_quant/coco128/images/train2017/000000000009.jpg 219.04KB

yolov5_quant/coco128/images/train2017/000000000025.jpg 191.77KB

yolov5_quant/coco128/images/train2017/000000000030.jpg 69.79KB

yolov5_quant/coco128/images/train2017/000000000034.jpg 396.5KB

yolov5_quant/coco128/images/train2017/000000000036.jpg 254.11KB

yolov5_quant/coco128/images/train2017/000000000042.jpg 208.31KB

yolov5_quant/coco128/images/train2017/000000000049.jpg 121.7KB

yolov5_quant/coco128/images/train2017/000000000061.jpg 390.96KB

yolov5_quant/coco128/images/train2017/000000000064.jpg 215.69KB

yolov5_quant/coco128/images/train2017/000000000071.jpg 209.17KB

yolov5_quant/coco128/images/train2017/000000000072.jpg 233.51KB

yolov5_quant/coco128/images/train2017/000000000073.jpg 374.66KB

yolov5_quant/coco128/images/train2017/000000000074.jpg 172.02KB

yolov5_quant/coco128/images/train2017/000000000077.jpg 155.48KB

yolov5_quant/coco128/images/train2017/000000000078.jpg 204.79KB

yolov5_quant/coco128/images/train2017/000000000081.jpg 110.61KB

yolov5_quant/coco128/images/train2017/000000000086.jpg 187.72KB

yolov5_quant/coco128/images/train2017/000000000089.jpg 159.79KB

yolov5_quant/coco128/images/train2017/000000000092.jpg 72.19KB

yolov5_quant/coco128/images/train2017/000000000094.jpg 219.91KB

yolov5_quant/coco128/images/train2017/000000000109.jpg 228.53KB

yolov5_quant/coco128/images/train2017/000000000110.jpg 196.12KB

yolov5_quant/coco128/images/train2017/000000000113.jpg 250.36KB

yolov5_quant/coco128/images/train2017/000000000127.jpg 200.65KB

yolov5_quant/coco128/images/train2017/000000000133.jpg 159.77KB

yolov5_quant/coco128/images/train2017/000000000136.jpg 102.62KB

yolov5_quant/coco128/images/train2017/000000000138.jpg 229.31KB

yolov5_quant/coco128/images/train2017/000000000142.jpg 105.62KB

yolov5_quant/coco128/images/train2017/000000000143.jpg 58.16KB

yolov5_quant/coco128/images/train2017/000000000144.jpg 220.37KB

yolov5_quant/coco128/images/train2017/000000000149.jpg 69.5KB

yolov5_quant/coco128/images/train2017/000000000151.jpg 373.74KB

yolov5_quant/coco128/images/train2017/000000000154.jpg 138.54KB

yolov5_quant/coco128/images/train2017/000000000164.jpg 157.48KB

yolov5_quant/coco128/images/train2017/000000000165.jpg 223.86KB

yolov5_quant/coco128/images/train2017/000000000192.jpg 225.02KB

yolov5_quant/coco128/images/train2017/000000000194.jpg 192.28KB

yolov5_quant/coco128/images/train2017/000000000196.jpg 155.22KB

yolov5_quant/coco128/images/train2017/000000000201.jpg 155.56KB

yolov5_quant/coco128/images/train2017/000000000208.jpg 169.91KB

yolov5_quant/coco128/images/train2017/000000000241.jpg 105.19KB

yolov5_quant/coco128/images/train2017/000000000247.jpg 154.54KB

yolov5_quant/coco128/images/train2017/000000000250.jpg 110.33KB

yolov5_quant/coco128/images/train2017/000000000257.jpg 203.87KB

yolov5_quant/coco128/images/train2017/000000000260.jpg 92.77KB

yolov5_quant/coco128/images/train2017/000000000263.jpg 219.18KB

yolov5_quant/coco128/images/train2017/000000000283.jpg 143.39KB

yolov5_quant/coco128/images/train2017/000000000294.jpg 73.86KB

yolov5_quant/coco128/images/train2017/000000000307.jpg 291.53KB

yolov5_quant/coco128/images/train2017/000000000308.jpg 135.09KB

yolov5_quant/coco128/images/train2017/000000000309.jpg 330.13KB

yolov5_quant/coco128/images/train2017/000000000312.jpg 292.54KB

yolov5_quant/coco128/images/train2017/000000000315.jpg 180.06KB

yolov5_quant/coco128/images/train2017/000000000321.jpg 173.42KB

yolov5_quant/coco128/images/train2017/000000000322.jpg 96.73KB

yolov5_quant/coco128/images/train2017/000000000326.jpg 53.74KB

yolov5_quant/coco128/images/train2017/000000000328.jpg 155.97KB

yolov5_quant/coco128/images/train2017/000000000332.jpg 118.6KB

yolov5_quant/coco128/images/train2017/000000000338.jpg 79.8KB

yolov5_quant/coco128/images/train2017/000000000349.jpg 67.45KB

yolov5_quant/coco128/images/train2017/000000000357.jpg 128.38KB

yolov5_quant/coco128/images/train2017/000000000359.jpg 51.18KB

yolov5_quant/coco128/images/train2017/000000000360.jpg 115.85KB

yolov5_quant/coco128/images/train2017/000000000368.jpg 340.98KB

yolov5_quant/coco128/images/train2017/000000000370.jpg 82.41KB

yolov5_quant/coco128/images/train2017/000000000382.jpg 136.14KB

yolov5_quant/coco128/images/train2017/000000000384.jpg 133.17KB

yolov5_quant/coco128/images/train2017/000000000387.jpg 162.15KB

yolov5_quant/coco128/images/train2017/000000000389.jpg 260.39KB

yolov5_quant/coco128/images/train2017/000000000394.jpg 199.31KB

yolov5_quant/coco128/images/train2017/000000000395.jpg 240.75KB

yolov5_quant/coco128/images/train2017/000000000397.jpg 313.08KB

yolov5_quant/coco128/images/train2017/000000000400.jpg 239.83KB

yolov5_quant/coco128/images/train2017/000000000404.jpg 172.02KB

yolov5_quant/coco128/images/train2017/000000000415.jpg 115.38KB

yolov5_quant/coco128/images/train2017/000000000419.jpg 149.24KB

yolov5_quant/coco128/images/train2017/000000000428.jpg 99.97KB

yolov5_quant/coco128/images/train2017/000000000431.jpg 145.48KB

yolov5_quant/coco128/images/train2017/000000000436.jpg 134.69KB

yolov5_quant/coco128/images/train2017/000000000438.jpg 214.89KB

yolov5_quant/coco128/images/train2017/000000000443.jpg 95.38KB

yolov5_quant/coco128/images/train2017/000000000446.jpg 96.12KB

yolov5_quant/coco128/images/train2017/000000000450.jpg 207.86KB

yolov5_quant/coco128/images/train2017/000000000459.jpg 190.33KB

yolov5_quant/coco128/images/train2017/000000000471.jpg 73.59KB

yolov5_quant/coco128/images/train2017/000000000472.jpg 49.42KB

yolov5_quant/coco128/images/train2017/000000000474.jpg 128.4KB

yolov5_quant/coco128/images/train2017/000000000486.jpg 212.36KB

yolov5_quant/coco128/images/train2017/000000000488.jpg 103.1KB

yolov5_quant/coco128/images/train2017/000000000490.jpg 131.51KB

yolov5_quant/coco128/images/train2017/000000000491.jpg 93.78KB

yolov5_quant/coco128/images/train2017/000000000502.jpg 286.77KB

yolov5_quant/coco128/images/train2017/000000000508.jpg 144.55KB

yolov5_quant/coco128/images/train2017/000000000510.jpg 164.57KB

yolov5_quant/coco128/images/train2017/000000000514.jpg 124.03KB

yolov5_quant/coco128/images/train2017/000000000520.jpg 102.55KB

yolov5_quant/coco128/images/train2017/000000000529.jpg 223.18KB

yolov5_quant/coco128/images/train2017/000000000531.jpg 92.65KB

yolov5_quant/coco128/images/train2017/000000000532.jpg 209.05KB

yolov5_quant/coco128/images/train2017/000000000536.jpg 22.08KB

yolov5_quant/coco128/images/train2017/000000000540.jpg 252.32KB

yolov5_quant/coco128/images/train2017/000000000542.jpg 128.23KB

yolov5_quant/coco128/images/train2017/000000000544.jpg 183.66KB

yolov5_quant/coco128/images/train2017/000000000560.jpg 80.09KB

yolov5_quant/coco128/images/train2017/000000000562.jpg 44.69KB

yolov5_quant/coco128/images/train2017/000000000564.jpg 127.45KB

yolov5_quant/coco128/images/train2017/000000000569.jpg 126.65KB

yolov5_quant/coco128/images/train2017/000000000572.jpg 86.23KB

yolov5_quant/coco128/images/train2017/000000000575.jpg 529.87KB

yolov5_quant/coco128/images/train2017/000000000581.jpg 184.39KB

yolov5_quant/coco128/images/train2017/000000000584.jpg 150.74KB

yolov5_quant/coco128/images/train2017/000000000589.jpg 94.53KB

yolov5_quant/coco128/images/train2017/000000000590.jpg 71.21KB

yolov5_quant/coco128/images/train2017/000000000595.jpg 175.97KB

yolov5_quant/coco128/images/train2017/000000000597.jpg 170.24KB

yolov5_quant/coco128/images/train2017/000000000599.jpg 240.3KB

yolov5_quant/coco128/images/train2017/000000000605.jpg 311.07KB

yolov5_quant/coco128/images/train2017/000000000612.jpg 169.67KB

yolov5_quant/coco128/images/train2017/000000000620.jpg 125.48KB

yolov5_quant/coco128/images/train2017/000000000623.jpg 109.82KB

yolov5_quant/coco128/images/train2017/000000000625.jpg 167.18KB

yolov5_quant/coco128/images/train2017/000000000626.jpg 153.87KB

yolov5_quant/coco128/images/train2017/000000000629.jpg 286.1KB

yolov5_quant/coco128/images/train2017/000000000634.jpg 106.97KB

yolov5_quant/coco128/images/train2017/000000000636.jpg 171.33KB

yolov5_quant/coco128/images/train2017/000000000641.jpg 203.95KB

yolov5_quant/coco128/images/train2017/000000000643.jpg 39.59KB

yolov5_quant/coco128/images/train2017/000000000650.jpg 115.91KB

yolov5_quant/coco128/labels/

yolov5_quant/coco128/labels/ yolov5_quant/coco128/labels/train2017/

yolov5_quant/coco128/labels/train2017/ yolov5_quant/coco128/labels/train2017/.DS_Store 6KB

yolov5_quant/coco128/labels/train2017/.DS_Store 6KB

yolov5_quant/coco128/labels/train2017/000000000009.txt 308B

yolov5_quant/coco128/labels/train2017/000000000009.txt 308B

yolov5_quant/coco128/labels/train2017/000000000025.txt 78B

yolov5_quant/coco128/labels/train2017/000000000025.txt 78B

yolov5_quant/coco128/labels/train2017/000000000030.txt 72B

yolov5_quant/coco128/labels/train2017/000000000030.txt 72B

yolov5_quant/coco128/labels/train2017/000000000034.txt 39B

yolov5_quant/coco128/labels/train2017/000000000034.txt 39B

yolov5_quant/coco128/labels/train2017/000000000036.txt 77B

yolov5_quant/coco128/labels/train2017/000000000036.txt 77B

yolov5_quant/coco128/labels/train2017/000000000042.txt 35B

yolov5_quant/coco128/labels/train2017/000000000042.txt 35B

yolov5_quant/coco128/labels/train2017/000000000049.txt 324B

yolov5_quant/coco128/labels/train2017/000000000049.txt 324B

yolov5_quant/coco128/labels/train2017/000000000061.txt 190B

yolov5_quant/coco128/labels/train2017/000000000061.txt 190B

yolov5_quant/coco128/labels/train2017/000000000064.txt 154B

yolov5_quant/coco128/labels/train2017/000000000064.txt 154B

yolov5_quant/coco128/labels/train2017/000000000071.txt 604B

yolov5_quant/coco128/labels/train2017/000000000071.txt 604B

yolov5_quant/coco128/labels/train2017/000000000072.txt 78B

yolov5_quant/coco128/labels/train2017/000000000072.txt 78B

yolov5_quant/coco128/labels/train2017/000000000073.txt 76B

yolov5_quant/coco128/labels/train2017/000000000073.txt 76B

yolov5_quant/coco128/labels/train2017/000000000074.txt 303B

yolov5_quant/coco128/labels/train2017/000000000074.txt 303B

yolov5_quant/coco128/labels/train2017/000000000077.txt 283B

yolov5_quant/coco128/labels/train2017/000000000077.txt 283B

yolov5_quant/coco128/labels/train2017/000000000078.txt 39B

yolov5_quant/coco128/labels/train2017/000000000078.txt 39B

yolov5_quant/coco128/labels/train2017/000000000081.txt 38B

yolov5_quant/coco128/labels/train2017/000000000081.txt 38B

yolov5_quant/coco128/labels/train2017/000000000086.txt 111B

yolov5_quant/coco128/labels/train2017/000000000089.txt 386B

yolov5_quant/coco128/labels/train2017/000000000092.txt 78B

yolov5_quant/coco128/labels/train2017/000000000094.txt 75B

yolov5_quant/coco128/labels/train2017/000000000109.txt 304B

yolov5_quant/coco128/labels/train2017/000000000110.txt 914B

yolov5_quant/coco128/labels/train2017/000000000113.txt 692B

yolov5_quant/coco128/labels/train2017/000000000127.txt 658B

yolov5_quant/coco128/labels/train2017/000000000133.txt 77B

yolov5_quant/coco128/labels/train2017/000000000136.txt 145B

yolov5_quant/coco128/labels/train2017/000000000138.txt 267B

yolov5_quant/coco128/labels/train2017/000000000142.txt 140B

yolov5_quant/coco128/labels/train2017/000000000143.txt 287B

yolov5_quant/coco128/labels/train2017/000000000144.txt 116B

yolov5_quant/coco128/labels/train2017/000000000149.txt 833B

yolov5_quant/coco128/labels/train2017/000000000151.txt 114B

yolov5_quant/coco128/labels/train2017/000000000154.txt 117B

yolov5_quant/coco128/labels/train2017/000000000164.txt 1.49KB

yolov5_quant/coco128/labels/train2017/000000000165.txt 154B

yolov5_quant/coco128/labels/train2017/000000000192.txt 186B

yolov5_quant/coco128/labels/train2017/000000000194.txt 78B

yolov5_quant/coco128/labels/train2017/000000000196.txt 1.56KB

yolov5_quant/coco128/labels/train2017/000000000201.txt 306B

yolov5_quant/coco128/labels/train2017/000000000208.txt 153B

yolov5_quant/coco128/labels/train2017/000000000241.txt 537B

yolov5_quant/coco128/labels/train2017/000000000247.txt 302B

yolov5_quant/coco128/labels/train2017/000000000250.txt

yolov5_quant/coco128/labels/train2017/000000000257.txt 1.21KB

yolov5_quant/coco128/labels/train2017/000000000260.txt 246B

yolov5_quant/coco128/labels/train2017/000000000263.txt 78B

yolov5_quant/coco128/labels/train2017/000000000283.txt 195B

yolov5_quant/coco128/labels/train2017/000000000294.txt 772B

yolov5_quant/coco128/labels/train2017/000000000307.txt 114B

yolov5_quant/coco128/labels/train2017/000000000308.txt 497B

yolov5_quant/coco128/labels/train2017/000000000309.txt 154B

yolov5_quant/coco128/labels/train2017/000000000312.txt 231B

yolov5_quant/coco128/labels/train2017/000000000315.txt 1.42KB

yolov5_quant/coco128/labels/train2017/000000000321.txt 114B

yolov5_quant/coco128/labels/train2017/000000000322.txt 77B

yolov5_quant/coco128/labels/train2017/000000000326.txt 77B

yolov5_quant/coco128/labels/train2017/000000000328.txt 424B

yolov5_quant/coco128/labels/train2017/000000000332.txt 270B

yolov5_quant/coco128/labels/train2017/000000000338.txt 226B

yolov5_quant/coco128/labels/train2017/000000000349.txt 151B

yolov5_quant/coco128/labels/train2017/000000000357.txt 634B

yolov5_quant/coco128/labels/train2017/000000000359.txt 140B

yolov5_quant/coco128/labels/train2017/000000000360.txt 105B

yolov5_quant/coco128/labels/train2017/000000000368.txt 486B

yolov5_quant/coco128/labels/train2017/000000000370.txt 77B

yolov5_quant/coco128/labels/train2017/000000000382.txt 115B

yolov5_quant/coco128/labels/train2017/000000000384.txt 426B

yolov5_quant/coco128/labels/train2017/000000000387.txt 116B

yolov5_quant/coco128/labels/train2017/000000000389.txt 526B

yolov5_quant/coco128/labels/train2017/000000000394.txt 78B

yolov5_quant/coco128/labels/train2017/000000000395.txt 490B

yolov5_quant/coco128/labels/train2017/000000000397.txt 271B

yolov5_quant/coco128/labels/train2017/000000000400.txt 77B

yolov5_quant/coco128/labels/train2017/000000000404.txt 190B

yolov5_quant/coco128/labels/train2017/000000000415.txt 77B

yolov5_quant/coco128/labels/train2017/000000000419.txt 267B

yolov5_quant/coco128/labels/train2017/000000000428.txt 116B

yolov5_quant/coco128/labels/train2017/000000000431.txt 116B

yolov5_quant/coco128/labels/train2017/000000000436.txt 77B

yolov5_quant/coco128/labels/train2017/000000000438.txt 497B

yolov5_quant/coco128/labels/train2017/000000000443.txt 231B

yolov5_quant/coco128/labels/train2017/000000000446.txt 501B

yolov5_quant/coco128/labels/train2017/000000000450.txt 142B

yolov5_quant/coco128/labels/train2017/000000000459.txt 77B

yolov5_quant/coco128/labels/train2017/000000000471.txt 75B

yolov5_quant/coco128/labels/train2017/000000000472.txt 38B

yolov5_quant/coco128/labels/train2017/000000000474.txt 73B

yolov5_quant/coco128/labels/train2017/000000000486.txt 304B

yolov5_quant/coco128/labels/train2017/000000000488.txt 374B

yolov5_quant/coco128/labels/train2017/000000000490.txt 69B

yolov5_quant/coco128/labels/train2017/000000000491.txt 147B

yolov5_quant/coco128/labels/train2017/000000000502.txt 39B

yolov5_quant/coco128/labels/train2017/000000000508.txt

yolov5_quant/coco128/labels/train2017/000000000510.txt 112B

yolov5_quant/coco128/labels/train2017/000000000514.txt 39B

yolov5_quant/coco128/labels/train2017/000000000520.txt 421B

yolov5_quant/coco128/labels/train2017/000000000529.txt 112B

yolov5_quant/coco128/labels/train2017/000000000531.txt 569B

yolov5_quant/coco128/labels/train2017/000000000532.txt 305B

yolov5_quant/coco128/labels/train2017/000000000536.txt 425B

yolov5_quant/coco128/labels/train2017/000000000540.txt 744B

yolov5_quant/coco128/labels/train2017/000000000542.txt 637B

yolov5_quant/coco128/labels/train2017/000000000544.txt 601B

yolov5_quant/coco128/labels/train2017/000000000560.txt 194B

yolov5_quant/coco128/labels/train2017/000000000562.txt 155B

yolov5_quant/coco128/labels/train2017/000000000564.txt 616B

yolov5_quant/coco128/labels/train2017/000000000569.txt 194B

yolov5_quant/coco128/labels/train2017/000000000572.txt 114B

yolov5_quant/coco128/labels/train2017/000000000575.txt 39B

yolov5_quant/coco128/labels/train2017/000000000581.txt 36B

yolov5_quant/coco128/labels/train2017/000000000584.txt 524B

yolov5_quant/coco128/labels/train2017/000000000589.txt 73B

yolov5_quant/coco128/labels/train2017/000000000590.txt 73B

yolov5_quant/coco128/labels/train2017/000000000595.txt 39B

yolov5_quant/coco128/labels/train2017/000000000597.txt 268B

yolov5_quant/coco128/labels/train2017/000000000599.txt 192B

yolov5_quant/coco128/labels/train2017/000000000605.txt 142B

yolov5_quant/coco128/labels/train2017/000000000612.txt 417B

yolov5_quant/coco128/labels/train2017/000000000620.txt 78B

yolov5_quant/coco128/labels/train2017/000000000623.txt 141B

yolov5_quant/coco128/labels/train2017/000000000625.txt 148B

yolov5_quant/coco128/labels/train2017/000000000626.txt 78B

yolov5_quant/coco128/labels/train2017/000000000629.txt 38B

yolov5_quant/coco128/labels/train2017/000000000634.txt 225B

yolov5_quant/coco128/labels/train2017/000000000636.txt 39B

yolov5_quant/coco128/labels/train2017/000000000641.txt 454B

yolov5_quant/coco128/labels/train2017/000000000643.txt 717B

yolov5_quant/coco128/labels/train2017/000000000650.txt 77B

yolov5_quant/coco128/labels/train2017.cache 44.17KB

yolov5_quant/coco128/labels/train2017.cache.npy 43.8KB

yolov5_quant/data/

yolov5_quant/data/Argoverse.yaml 2.71KB

yolov5_quant/data/GlobalWheat2020.yaml 1.88KB

yolov5_quant/data/ImageNet.yaml 15.72KB

yolov5_quant/data/Objects365.yaml 8.01KB

yolov5_quant/data/SKU-110K.yaml 2.33KB

yolov5_quant/data/VOC.yaml 3.38KB

yolov5_quant/data/VisDrone.yaml 2.91KB

yolov5_quant/data/coco.yaml 2.32KB

yolov5_quant/data/coco128.yaml 1.39KB

yolov5_quant/data/hyps/

yolov5_quant/data/hyps/hyp.Objects365.yaml 673B

yolov5_quant/data/hyps/hyp.VOC.yaml 1.13KB

yolov5_quant/data/hyps/hyp.scratch-high.yaml 1.64KB

yolov5_quant/data/hyps/hyp.scratch-low.yaml 1.65KB

yolov5_quant/data/hyps/hyp.scratch-med.yaml 1.65KB

yolov5_quant/data/images/

yolov5_quant/data/images/bus.jpg 476.01KB

yolov5_quant/data/images/zidane.jpg 164.99KB

yolov5_quant/data/scripts/

yolov5_quant/data/scripts/download_weights.sh 590B

yolov5_quant/data/scripts/get_coco.sh 1.53KB

yolov5_quant/data/scripts/get_coco128.sh 618B

yolov5_quant/data/scripts/get_imagenet.sh 1.63KB

yolov5_quant/data/xView.yaml 4.99KB

yolov5_quant/detect.py 13.33KB

yolov5_quant/export.py 29.87KB

yolov5_quant/hubconf.py 6.68KB

yolov5_quant/models/

yolov5_quant/models/__init__.py

yolov5_quant/models/__pycache__/

yolov5_quant/models/__pycache__/__init__.cpython-38.pyc 130B

yolov5_quant/models/__pycache__/common.cpython-38.pyc 32.4KB

yolov5_quant/models/__pycache__/experimental.cpython-38.pyc 4.69KB

yolov5_quant/models/__pycache__/yolo.cpython-38.pyc 13.79KB

yolov5_quant/models/common.py 36.37KB

yolov5_quant/models/experimental.py 4.1KB

yolov5_quant/models/hub/

yolov5_quant/models/hub/anchors.yaml 3.26KB

yolov5_quant/models/hub/yolov3-spp.yaml 1.53KB

yolov5_quant/models/hub/yolov3-tiny.yaml 1.2KB

yolov5_quant/models/hub/yolov3.yaml 1.52KB

yolov5_quant/models/hub/yolov5-bifpn.yaml 1.39KB

yolov5_quant/models/hub/yolov5-fpn.yaml 1.19KB

yolov5_quant/models/hub/yolov5-p2.yaml 1.65KB

yolov5_quant/models/hub/yolov5-p34.yaml 1.32KB

yolov5_quant/models/hub/yolov5-p6.yaml 1.7KB

yolov5_quant/models/hub/yolov5-p7.yaml 2.07KB

yolov5_quant/models/hub/yolov5-panet.yaml 1.37KB

yolov5_quant/models/hub/yolov5l6.yaml 1.78KB

yolov5_quant/models/hub/yolov5m6.yaml 1.78KB

yolov5_quant/models/hub/yolov5n6.yaml 1.78KB

yolov5_quant/models/hub/yolov5s-ghost.yaml 1.45KB

yolov5_quant/models/hub/yolov5s-transformer.yaml 1.41KB

yolov5_quant/models/hub/yolov5s6.yaml 1.78KB

yolov5_quant/models/hub/yolov5x6.yaml 1.78KB

yolov5_quant/models/tf.py 24.9KB

yolov5_quant/models/yolo.py 15.93KB

yolov5_quant/models/yolov5l.yaml 1.37KB

yolov5_quant/models/yolov5m.yaml 1.37KB

yolov5_quant/models/yolov5n.yaml 1.37KB

yolov5_quant/models/yolov5s.yaml 1.37KB

yolov5_quant/models/yolov5x.yaml 1.37KB

yolov5_quant/py_quant_utils.py 5.22KB

yolov5_quant/quant_flow_ptq_int8.py 12.68KB

yolov5_quant/quant_flow_ptq_sensitive_int8.py 12.92KB

yolov5_quant/quant_flow_qat_int8.py 15.71KB

yolov5_quant/quant_flow_qat_int8_ls.py 23.2KB

yolov5_quant/requirements.txt 1.19KB

yolov5_quant/setup.cfg 1.69KB

yolov5_quant/summary_sensitive_analysis.json 368B

yolov5_quant/train.py 32.51KB

yolov5_quant/tutorial.ipynb 57.32KB

yolov5_quant/utils/

yolov5_quant/utils/__init__.py 1.07KB

yolov5_quant/utils/__pycache__/

yolov5_quant/utils/__pycache__/__init__.cpython-38.pyc 1015B

yolov5_quant/utils/__pycache__/augmentations.cpython-38.pyc 10.8KB

yolov5_quant/utils/__pycache__/autoanchor.cpython-38.pyc 6.3KB

yolov5_quant/utils/__pycache__/callbacks.cpython-38.pyc 2.35KB

yolov5_quant/utils/__pycache__/dataloaders.cpython-38.pyc 38.69KB

yolov5_quant/utils/__pycache__/downloads.cpython-38.pyc 4.91KB

yolov5_quant/utils/__pycache__/general.cpython-38.pyc 36.58KB

yolov5_quant/utils/__pycache__/metrics.cpython-38.pyc 11.26KB

yolov5_quant/utils/__pycache__/plots.cpython-38.pyc 19.44KB

yolov5_quant/utils/__pycache__/torch_utils.cpython-38.pyc 16.36KB

yolov5_quant/utils/activations.py 3.37KB

yolov5_quant/utils/augmentations.py 14.33KB

yolov5_quant/utils/autoanchor.py 7.24KB

yolov5_quant/utils/autobatch.py 2.52KB

yolov5_quant/utils/aws/

yolov5_quant/utils/aws/__init__.py

yolov5_quant/utils/aws/mime.sh 780B

yolov5_quant/utils/aws/resume.py 1.17KB

yolov5_quant/utils/aws/userdata.sh 1.22KB

yolov5_quant/utils/benchmarks.py 6.79KB

yolov5_quant/utils/callbacks.py 2.35KB

yolov5_quant/utils/dataloaders.py 49.88KB

yolov5_quant/utils/docker/

yolov5_quant/utils/docker/Dockerfile 2.41KB

yolov5_quant/utils/docker/Dockerfile-arm64 1.64KB

yolov5_quant/utils/docker/Dockerfile-cpu 1.62KB

yolov5_quant/utils/downloads.py 7.15KB

yolov5_quant/utils/flask_rest_api/

yolov5_quant/utils/flask_rest_api/README.md 1.67KB

yolov5_quant/utils/flask_rest_api/example_request.py 368B

yolov5_quant/utils/flask_rest_api/restapi.py 1.41KB

yolov5_quant/utils/general.py 42.46KB

yolov5_quant/utils/google_app_engine/

yolov5_quant/utils/google_app_engine/Dockerfile 821B

yolov5_quant/utils/google_app_engine/additional_requirements.txt 105B

yolov5_quant/utils/google_app_engine/app.yaml 174B

yolov5_quant/utils/loggers/

yolov5_quant/utils/loggers/__init__.py 12.95KB

yolov5_quant/utils/loggers/clearml/

yolov5_quant/utils/loggers/clearml/README.md 10.26KB

yolov5_quant/utils/loggers/clearml/__init__.py

yolov5_quant/utils/loggers/clearml/clearml_utils.py 7.28KB

yolov5_quant/utils/loggers/clearml/hpo.py 5.14KB

yolov5_quant/utils/loggers/wandb/

yolov5_quant/utils/loggers/wandb/README.md 10.55KB

yolov5_quant/utils/loggers/wandb/__init__.py

yolov5_quant/utils/loggers/wandb/log_dataset.py 1.01KB

yolov5_quant/utils/loggers/wandb/sweep.py 1.18KB

yolov5_quant/utils/loggers/wandb/sweep.yaml 2.41KB

yolov5_quant/utils/loggers/wandb/wandb_utils.py 27.38KB

yolov5_quant/utils/loss.py 9.69KB

yolov5_quant/utils/metrics.py 14.38KB

yolov5_quant/utils/plots.py 21.92KB

yolov5_quant/utils/torch_utils.py 19.11KB

yolov5_quant/val.py 19.22KB

yolov5_quant/venv/

yolov5_quant/venv/.gitignore 42B

yolov5_quant/venv/Lib/

yolov5_quant/venv/Lib/site-packages/

yolov5_quant/venv/Lib/site-packages/__pycache__/

yolov5_quant/venv/Lib/site-packages/__pycache__/_virtualenv.cpython-38.pyc 3.85KB

yolov5_quant/venv/Lib/site-packages/_distutils_hack/

yolov5_quant/venv/Lib/site-packages/_distutils_hack/__init__.py 5.98KB

yolov5_quant/venv/Lib/site-packages/_distutils_hack/__pycache__/

yolov5_quant/venv/Lib/site-packages/_distutils_hack/__pycache__/__init__.cpython-38.pyc 7.39KB

yolov5_quant/venv/Lib/site-packages/_distutils_hack/override.py 44B

yolov5_quant/venv/Lib/site-packages/_virtualenv.pth 18B

yolov5_quant/venv/Lib/site-packages/_virtualenv.py 5.63KB

yolov5_quant/venv/Lib/site-packages/distutils-precedence.pth 151B

yolov5_quant/venv/Lib/site-packages/pip/

yolov5_quant/venv/Lib/site-packages/pip/__init__.py 357B

yolov5_quant/venv/Lib/site-packages/pip/__main__.py 1.17KB

yolov5_quant/venv/Lib/site-packages/pip/__pip-runner__.py 1.41KB

yolov5_quant/venv/Lib/site-packages/pip/__pycache__/

yolov5_quant/venv/Lib/site-packages/pip/__pycache__/__init__.cpython-38.pyc 604B

yolov5_quant/venv/Lib/site-packages/pip/__pycache__/__main__.cpython-38.pyc 564B

yolov5_quant/venv/Lib/site-packages/pip/_internal/

yolov5_quant/venv/Lib/site-packages/pip/_internal/__init__.py 573B

yolov5_quant/venv/Lib/site-packages/pip/_internal/__pycache__/

yolov5_quant/venv/Lib/site-packages/pip/_internal/__pycache__/__init__.cpython-38.pyc 725B

yolov5_quant/venv/Lib/site-packages/pip/_internal/__pycache__/build_env.cpython-38.pyc 9.28KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/__pycache__/cache.cpython-38.pyc 8.98KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/__pycache__/configuration.cpython-38.pyc 10.99KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/__pycache__/exceptions.cpython-38.pyc 22.81KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/__pycache__/pyproject.cpython-38.pyc 3.52KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/__pycache__/self_outdated_check.cpython-38.pyc 6.33KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/__pycache__/wheel_builder.cpython-38.pyc 8.92KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/build_env.py 9.99KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/cache.py 10.48KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/cli/

yolov5_quant/venv/Lib/site-packages/pip/_internal/cli/ yolov5_quant/venv/Lib/site-packages/pip/_internal/cli/__init__.py 132B

yolov5_quant/venv/Lib/site-packages/pip/_internal/cli/__init__.py 132B

yolov5_quant/venv/Lib/site-packages/pip/_internal/cli/__pycache__/

yolov5_quant/venv/Lib/site-packages/pip/_internal/cli/__pycache__/ yolov5_quant/venv/Lib/site-packages/pip/_internal/cli/__pycache__/__init__.cpython-38.pyc 245B

yolov5_quant/venv/Lib/site-packages/pip/_internal/cli/__pycache__/__init__.cpython-38.pyc 245B

yolov5_quant/venv/Lib/site-packages/pip/_internal/cli/__pycache__/autocompletion.cpython-38.pyc 5.15KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/cli/__pycache__/autocompletion.cpython-38.pyc 5.15KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/cli/__pycache__/base_command.cpython-38.pyc 5.98KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/cli/__pycache__/base_command.cpython-38.pyc 5.98KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/cli/__pycache__/cmdoptions.cpython-38.pyc 22.6KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/cli/__pycache__/cmdoptions.cpython-38.pyc 22.6KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/cli/__pycache__/command_context.cpython-38.pyc 1.23KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/cli/__pycache__/command_context.cpython-38.pyc 1.23KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/cli/__pycache__/main.cpython-38.pyc 1.3KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/cli/__pycache__/main.cpython-38.pyc 1.3KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/cli/__pycache__/main_parser.cpython-38.pyc 2.92KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/cli/__pycache__/main_parser.cpython-38.pyc 2.92KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/cli/__pycache__/parser.cpython-38.pyc 9.69KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/cli/__pycache__/parser.cpython-38.pyc 9.69KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/cli/__pycache__/progress_bars.cpython-38.pyc 1.81KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/cli/__pycache__/progress_bars.cpython-38.pyc 1.81KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/cli/__pycache__/req_command.cpython-38.pyc 12.69KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/cli/__pycache__/req_command.cpython-38.pyc 12.69KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/cli/__pycache__/spinners.cpython-38.pyc 4.81KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/cli/__pycache__/spinners.cpython-38.pyc 4.81KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/cli/__pycache__/status_codes.cpython-38.pyc 324B

yolov5_quant/venv/Lib/site-packages/pip/_internal/cli/__pycache__/status_codes.cpython-38.pyc 324B

yolov5_quant/venv/Lib/site-packages/pip/_internal/cli/autocompletion.py 6.52KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/cli/autocompletion.py 6.52KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/cli/base_command.py 7.66KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/cli/base_command.py 7.66KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/cli/cmdoptions.py 28.69KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/cli/cmdoptions.py 28.69KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/cli/command_context.py 774B

yolov5_quant/venv/Lib/site-packages/pip/_internal/cli/command_context.py 774B

yolov5_quant/venv/Lib/site-packages/pip/_internal/cli/main.py 2.41KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/cli/main.py 2.41KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/cli/main_parser.py 4.24KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/cli/main_parser.py 4.24KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/cli/parser.py 10.56KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/cli/progress_bars.py 1.92KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/cli/req_command.py 17.75KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/cli/spinners.py 5KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/cli/status_codes.py 116B

yolov5_quant/venv/Lib/site-packages/pip/_internal/commands/

yolov5_quant/venv/Lib/site-packages/pip/_internal/commands/__init__.py 3.79KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/commands/__pycache__/

yolov5_quant/venv/Lib/site-packages/pip/_internal/commands/__pycache__/__init__.cpython-38.pyc 3.06KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/commands/__pycache__/install.cpython-38.pyc 19.58KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/commands/cache.py 7.4KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/commands/check.py 1.65KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/commands/completion.py 4.03KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/commands/configuration.py 9.58KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/commands/debug.py 6.42KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/commands/download.py 5.17KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/commands/freeze.py 2.88KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/commands/hash.py 1.66KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/commands/help.py 1.11KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/commands/index.py 4.65KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/commands/inspect.py 3.29KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/commands/install.py 30.98KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/commands/list.py 12.05KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/commands/search.py 5.56KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/commands/show.py 5.99KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/commands/uninstall.py 3.59KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/commands/wheel.py 7.22KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/configuration.py 13.21KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/distributions/

yolov5_quant/venv/Lib/site-packages/pip/_internal/distributions/__init__.py 858B

yolov5_quant/venv/Lib/site-packages/pip/_internal/distributions/__pycache__/

yolov5_quant/venv/Lib/site-packages/pip/_internal/distributions/__pycache__/__init__.cpython-38.pyc 768B

yolov5_quant/venv/Lib/site-packages/pip/_internal/distributions/__pycache__/base.cpython-38.pyc 1.83KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/distributions/__pycache__/installed.cpython-38.pyc 1.22KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/distributions/__pycache__/sdist.cpython-38.pyc 4.94KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/distributions/__pycache__/wheel.cpython-38.pyc 1.58KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/distributions/base.py 1.19KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/distributions/installed.py 729B

yolov5_quant/venv/Lib/site-packages/pip/_internal/distributions/sdist.py 6.34KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/distributions/wheel.py 1.14KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/exceptions.py 20.45KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/index/

yolov5_quant/venv/Lib/site-packages/pip/_internal/index/__init__.py 30B

yolov5_quant/venv/Lib/site-packages/pip/_internal/index/__pycache__/

yolov5_quant/venv/Lib/site-packages/pip/_internal/index/__pycache__/__init__.cpython-38.pyc 199B

yolov5_quant/venv/Lib/site-packages/pip/_internal/index/__pycache__/collector.cpython-38.pyc 14.9KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/index/__pycache__/package_finder.cpython-38.pyc 28.35KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/index/__pycache__/sources.cpython-38.pyc 7KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/index/collector.py 16.12KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/index/package_finder.py 36.71KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/index/sources.py 6.4KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/locations/

yolov5_quant/venv/Lib/site-packages/pip/_internal/locations/__init__.py 17.14KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/locations/__pycache__/

yolov5_quant/venv/Lib/site-packages/pip/_internal/locations/__pycache__/__init__.cpython-38.pyc 12.3KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/locations/__pycache__/_distutils.cpython-38.pyc 4.68KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/locations/__pycache__/_sysconfig.cpython-38.pyc 6.08KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/locations/__pycache__/base.cpython-38.pyc 2.33KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/locations/_distutils.py 6.15KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/locations/_sysconfig.py 7.68KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/locations/base.py 2.51KB

yolov5_quant/venv/Lib/site-packages/pip/_internal/main.py 340B

yolov5_quant/venv/Lib/site-packages/pip/_internal/metadata/

yolov5_quant/venv/Lib/site-packages/pip/_internal/metadata/__init__.py 4.18KB